How to Detect Reputation Risk Early

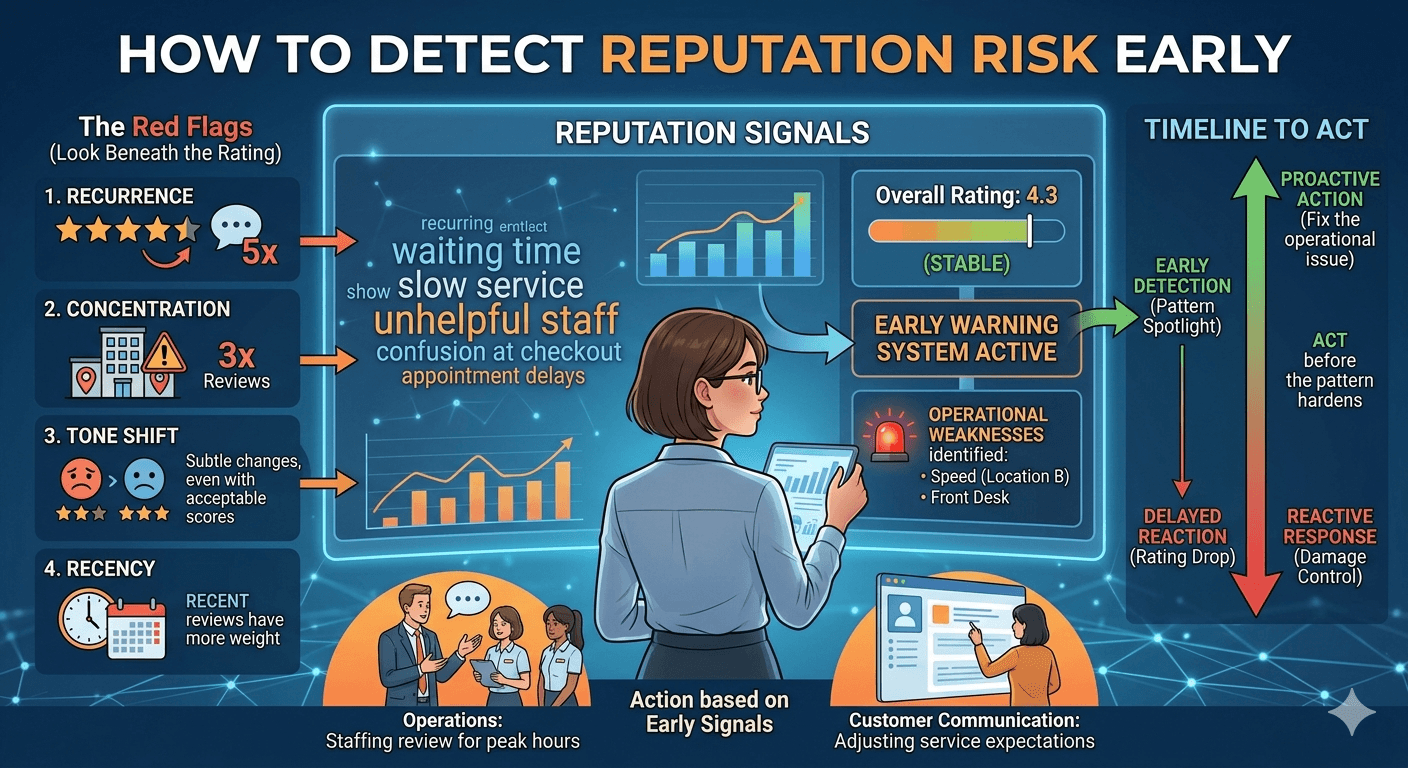

Most teams realize they have a reputation problem only after it becomes visible in the average rating. By that point, the issue has usually been building for weeks. Customers have already repeated the same complaint, frontline teams have already felt the friction, and decision makers are no longer dealing with an early signal. They are dealing with a visible decline.

That delay is what makes reputation risk expensive. The challenge is not understanding that a bad reputation matters. The challenge is spotting the signs early enough to act before the pattern hardens.

In practice, reputation risk rarely starts with a dramatic collapse. It starts with small but repeated shifts in public feedback. A few more complaints about waiting time. A growing number of comments about unhelpful staff. The same issue appearing across several reviews in one location. A subtle change in tone that suggests disappointment is becoming more common even while the rating still looks acceptable.

This is why businesses that depend on public reviews need a way to monitor risk before ratings visibly drop. The goal is not to overreact to every complaint. It is to identify patterns that point to operational or service issues early enough to respond with discipline.

What early reputation risk actually looks like

Early reputation risk is the stage where public feedback is beginning to indicate deterioration, but the decline is not yet obvious in top-level metrics. Many teams miss this phase because they are looking only for major incidents or visible score changes. In reality, risk often appears first in repetition, concentration, and trend direction.

Imagine a multi-location restaurant brand with an overall rating that still looks stable. Leadership sees a 4.3 average and assumes the customer experience is under control. But over the last two weeks, one location has started receiving repeated comments about slow service during lunch and confusion at checkout. The average rating may not move immediately, especially if review volume is high, but the underlying risk is already there.

The same pattern can appear in hospitality, healthcare, retail, education, or any business with public reviews. The specific issue changes by sector, but the structure is usually similar: repeated complaints begin to cluster around one operational weakness before the score reflects it clearly.

That is why early detection depends on reading reviews as a stream of signals, not as isolated comments.

Why ratings tend to lag behind the real problem

Ratings matter, but they are slow. They summarize outcomes after perception has already formed. They do not tell you when an issue started, whether it is isolated, or what caused it. They only confirm that customer experience has already been affected.

A business can accumulate several warning signs before the average score changes enough to attract attention. If review volume is large, a series of negative comments may barely move the visible average. If review volume is small, a problem may still be developing even before enough customers leave feedback to shift the score. In both cases, relying only on the rating creates delay.
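A rough arithmetic sketch makes the large-volume case concrete. The review counts and the 4.3 starting average below are made-up illustration values, and `average_after` is a hypothetical helper, not part of any review platform.

```python
def average_after(n_existing: int, current_avg: float, new_ratings: list[int]) -> float:
    """Return the average rating after new reviews are added to an existing base."""
    total = n_existing * current_avg + sum(new_ratings)
    return total / (n_existing + len(new_ratings))


# Ten one-star reviews added to a large and a small review base (illustrative numbers).
large_base = average_after(1000, 4.3, [1] * 10)
small_base = average_after(20, 4.3, [1] * 10)

print(round(large_base, 2))  # 4.27, still displayed as 4.3 on most platforms
print(round(small_base, 2))  # 3.2
```

With a thousand existing reviews, ten very poor experiences leave the rounded headline score unchanged, which is the delay described above.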

There is also a psychological problem. Many teams trust the average because it is easy to communicate. It fits neatly in a report. But convenience is not the same as usefulness. If the average looks stable while complaints around one service issue keep appearing, the average is no longer a sufficient operating signal.

That is why reputation risk should be monitored one layer below the rating itself.

Which review signals should be monitored first

The first signal is recurrence. A single negative review may describe a one-off bad experience. Five reviews across ten days mentioning the same issue are unlikely to be a coincidence. Repetition is one of the clearest signs that a customer-facing problem is becoming structurally visible.

The second signal is concentration. If the same complaint is concentrated in one location, one shift, one product line, or one service step, that pattern is more actionable than generic dissatisfaction. A concentration pattern helps teams move from “customers seem unhappy” to “this issue is happening here, under these conditions.”

The third signal is tone. Even when wording differs, reviews often reveal the same emotional direction. Customers may mention that service feels rushed, communication feels dismissive, or the overall experience feels unreliable. Tone shifts matter because they often emerge before customers start leaving dramatically low scores.

The fourth signal is recency. A problem that appears repeatedly in recent reviews deserves more attention than an old issue that has not reappeared. Teams should ask not only what customers are saying, but what they are saying now.

The fifth signal is severity. Not every complaint carries the same reputational risk. Comments about inconvenience and comments about trust are not equivalent. For example, occasional remarks about a crowded parking lot do not have the same strategic weight as repeated complaints about billing errors, rude staff, hygiene, cancellations, or misleading expectations.

Together, these signals help distinguish random noise from a pattern that deserves escalation.
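As a minimal sketch, four of these signals can be computed from reviews that have already been tagged by complaint topic. The `Review` fields, the 14-day recency window, and the `severe` flag are assumptions made for illustration. Tone is left out because it would need a sentiment model, which is beyond a short example.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Review:
    day: date
    location: str
    topic: str    # complaint theme, assumed to be tagged already
    severe: bool  # True for trust-level issues such as billing or hygiene


def summarize_topic(reviews: list[Review], topic: str, today: date,
                    recent_days: int = 14) -> dict:
    """Return simple recurrence, concentration, recency and severity figures for one topic."""
    hits = [r for r in reviews if r.topic == topic]
    if not hits:
        return {"recurrence": 0, "top_location": None,
                "concentration": 0.0, "recent": 0, "severe": 0}
    top_location, top_count = Counter(r.location for r in hits).most_common(1)[0]
    cutoff = today - timedelta(days=recent_days)
    return {
        "recurrence": len(hits),                     # how often the topic appears
        "top_location": top_location,                # where it appears most
        "concentration": top_count / len(hits),      # share held by that location
        "recent": sum(1 for r in hits if r.day >= cutoff),
        "severe": sum(1 for r in hits if r.severe),
    }


# Made-up sample data for a multi-location restaurant brand.
reviews = [
    Review(date(2024, 5, 18), "Downtown", "slow service", False),
    Review(date(2024, 5, 15), "Downtown", "slow service", False),
    Review(date(2024, 5, 12), "Downtown", "slow service", False),
    Review(date(2024, 5, 10), "Airport", "slow service", False),
    Review(date(2024, 3, 1), "Downtown", "billing error", True),
]
summary = summarize_topic(reviews, "slow service", today=date(2024, 5, 20))
print(summary)
```

Here the topic recurs four times, three quarters of the mentions come from one location, and all of them are recent. That combination is worth a closer look even if the rating has not moved.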

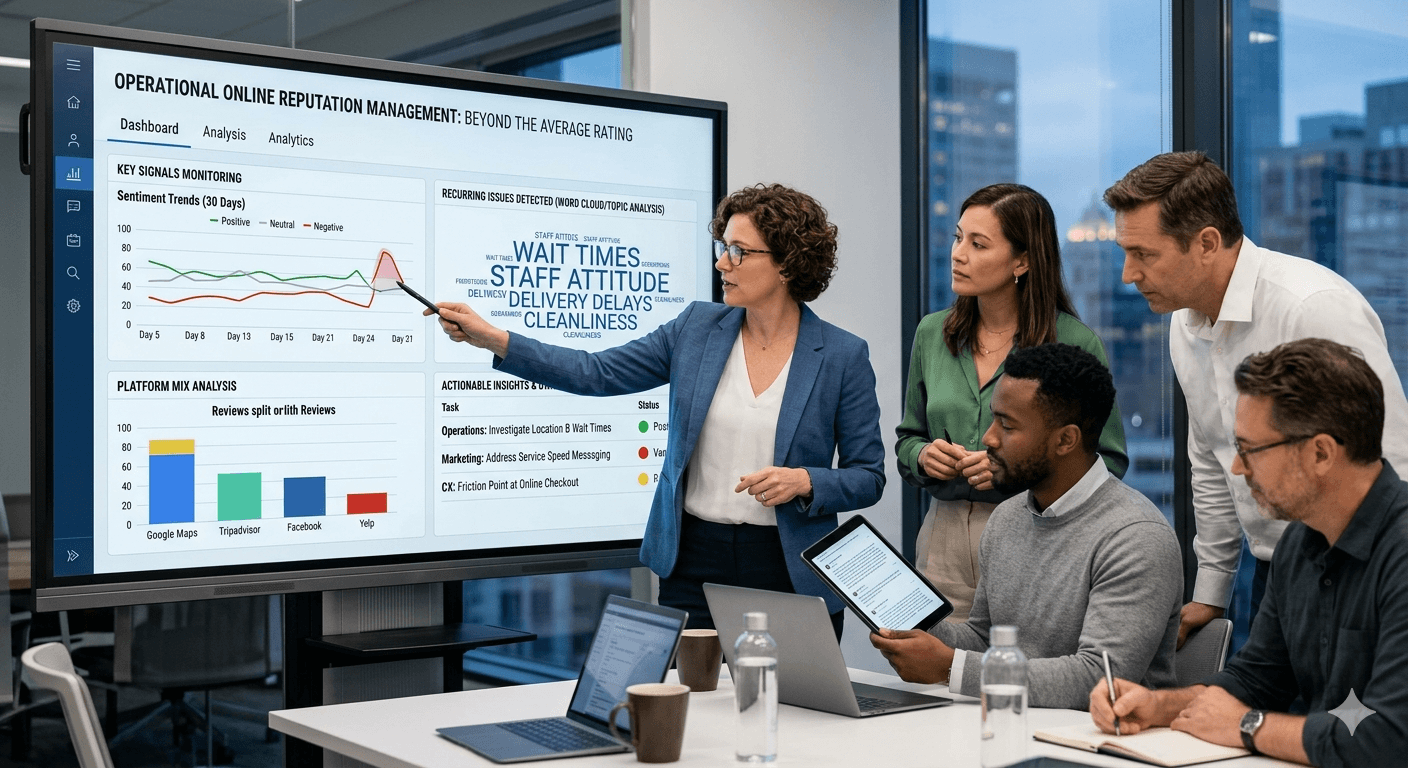

How recurring complaint patterns become an early warning system

The most practical way to detect reputation risk early is to watch for repeated complaint patterns over short windows of time. This does not require advanced analysis to be useful. It requires consistency.

For example, a clinic may begin to see a cluster of reviews mentioning appointment delays, poor communication at reception, and difficulty rescheduling. None of these comments alone proves a systemic problem. But if they appear repeatedly across different patients within a short period, they form an early warning signal. The same logic applies to hotels with recurring check-in complaints, retailers with repeated stock frustration, or delivery businesses with growing comments about missed time windows.

What matters is not only whether a complaint exists, but whether it starts to repeat across different customers and moments. Repetition reduces ambiguity. It turns anecdote into evidence.
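One way to sketch this check is to count how many different customers mention the same topic inside a short window. The tuple layout, the ten-day window, and the five-customer threshold are illustrative assumptions that each business would tune for its own review volume.

```python
from datetime import date, timedelta


def flag_recurring(mentions, today, window_days=10, min_customers=5):
    """Return topics mentioned by at least `min_customers` distinct customers in the window.

    `mentions` is a list of (day, customer_id, topic) tuples.
    """
    cutoff = today - timedelta(days=window_days)
    customers_by_topic = {}
    for day, customer_id, topic in mentions:
        if cutoff <= day <= today:
            # A set ensures one customer repeating a complaint is counted once.
            customers_by_topic.setdefault(topic, set()).add(customer_id)
    return {topic: len(customers)
            for topic, customers in customers_by_topic.items()
            if len(customers) >= min_customers}


# Made-up clinic data: (day, customer_id, topic).
mentions = [
    (date(2024, 6, 2), "p1", "appointment delays"),
    (date(2024, 6, 3), "p2", "appointment delays"),
    (date(2024, 6, 5), "p3", "appointment delays"),
    (date(2024, 6, 7), "p4", "appointment delays"),
    (date(2024, 6, 9), "p5", "appointment delays"),
    (date(2024, 6, 9), "p5", "appointment delays"),  # same patient again
    (date(2024, 6, 4), "p6", "rescheduling"),
    (date(2024, 6, 8), "p7", "rescheduling"),
    (date(2024, 5, 1), "p8", "rescheduling"),        # outside the window
]
flagged = flag_recurring(mentions, today=date(2024, 6, 10))
print(flagged)  # {'appointment delays': 5}
```

Counting distinct customers rather than raw mentions keeps one persistent reviewer from triggering the flag alone.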

That is also where teams can connect public feedback to operations. Repeated complaints point to a process flaw, staffing issue, expectation gap, or service breakdown that is already affecting perception. If you catch that pattern early, you still have time to fix the cause before the score decline becomes harder to reverse.

How to separate isolated complaints from real reputation risk

One of the biggest mistakes in online reputation management (ORM) is overreacting to every negative review. Effective monitoring is not about treating each complaint as a crisis. It is about deciding which issues are isolated and which ones signal a broader pattern.

A practical way to make that distinction is to ask four questions. Is the issue repeated by different customers? Is it happening recently? Is it tied to a specific part of the experience? And would a reasonable manager consider it important if it continued for another two weeks?

If the answer to those questions is mostly no, the complaint may be isolated. It still deserves attention, but probably not escalation. If the answer is mostly yes, the business is likely looking at a real reputation risk.
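The four questions can be written down as a small triage rule. This is only a sketch: the cutoffs (three or more "yes" answers to escalate, one or fewer to monitor) are an assumed reading of "mostly yes" and "mostly no", and the two-out-of-four case is left to human judgment.

```python
from dataclasses import dataclass


@dataclass
class Triage:
    repeated_by_different_customers: bool
    recent: bool
    tied_to_specific_step: bool
    important_if_continued: bool  # would it matter after another two weeks?


def classify(t: Triage) -> str:
    """Map the four yes/no answers to a suggested next step (assumed cutoffs)."""
    yes_count = sum([
        t.repeated_by_different_customers,
        t.recent,
        t.tied_to_specific_step,
        t.important_if_continued,
    ])
    if yes_count >= 3:
        return "escalate"
    if yes_count <= 1:
        return "monitor"
    return "review manually"


# One review about a busy store on a holiday.
holiday_crowd = classify(Triage(False, True, False, False))
# Repeated checkout and returns complaints across several stores.
checkout_pattern = classify(Triage(True, True, True, True))

print(holiday_crowd)     # monitor
print(checkout_pattern)  # escalate
```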

Consider a retail chain receiving one review complaining that a store was unusually busy on a holiday. That is not necessarily a meaningful signal. But if multiple stores receive repeated comments about staff availability, poor checkout communication, and confusion about returns, the pattern is no longer situational. It is operational.

This distinction matters because good ORM depends on calibration. Teams need enough sensitivity to detect risk early, but not so much sensitivity that every complaint creates noise and drains attention.

What teams should do once an early signal appears

Detecting a pattern is only useful if it leads to action. Once an early reputation risk is identified, the next step is not to debate whether customers are being too harsh. The next step is to decide who owns the issue and how the business will verify whether it is improving.

If the repeated complaint points to service speed, operations should review staffing, handoff points, and workload conditions. If the issue points to unclear expectations, marketing or customer communication may need to change. If the problem concentrates in one location, local management should own the response rather than treating it as a network-wide issue.

At the same time, teams should keep monitoring the signal after changes are made. If the complaint pattern slows down, that is evidence the intervention is working. If it continues, the problem may be deeper than first assumed.
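A simple before-and-after comparison is one way to check whether the pattern is slowing. In this sketch the complaint rate is the share of reviews mentioning the issue, and the five-point tolerance is an arbitrary assumption so that small fluctuations are not read as change.

```python
def complaint_rate(mentions: int, total_reviews: int) -> float:
    """Share of reviews in a period that mention the issue."""
    return mentions / total_reviews if total_reviews else 0.0


def trend_after_change(before, after, tolerance=0.05):
    """Compare (mentions, total_reviews) pairs from before and after an intervention."""
    delta = complaint_rate(*after) - complaint_rate(*before)
    if delta < -tolerance:
        return "improving"
    if delta > tolerance:
        return "worsening"
    return "unchanged"


# Made-up counts: 12 of 40 reviews mentioned slow service before the staffing
# change, 4 of 38 mentioned it afterwards.
result = trend_after_change(before=(12, 40), after=(4, 38))
print(result)  # improving
```

Comparing rates rather than raw counts avoids mistaking a quiet review period for a real improvement.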

This follow-through is what turns monitoring into management. It closes the loop between public perception and internal action. Businesses that do this well are not simply reading reviews. They are using them as an early decision system.

If your team is trying to build that layer more systematically, an online reputation management process helps move from ad hoc review reading to continuous monitoring, prioritization, and response.

Common blind spots that make teams miss reputation risk

A common blind spot is focusing only on one-star reviews. Some of the most valuable warning signs appear in three-star and four-star comments, where customers describe friction in specific detail without writing an obviously hostile review. These comments often contain the clearest explanation of what is starting to go wrong.

Another blind spot is reading feedback one review at a time. A team may scan ten reviews and feel that nothing looks especially serious, but counting changes the picture. If seven of those ten mention long waits, the reviews together point to a growing issue even though none of them looks alarming alone. Without aggregation or disciplined review, that pattern is easy to miss.
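The seven-of-ten example can be checked with a very small count. The sketch below uses plain keyword matching, which is a deliberately crude stand-in for proper topic tagging. The keyword list and the review texts are invented for illustration.

```python
WAIT_KEYWORDS = ("wait", "slow", "queue")  # hypothetical keyword list


def theme_share(review_texts, keywords):
    """Return the fraction of reviews containing at least one keyword."""
    if not review_texts:
        return 0.0
    hits = sum(1 for text in review_texts
               if any(k in text.lower() for k in keywords))
    return hits / len(review_texts)


review_texts = [
    "Waited 40 minutes for a table.",
    "Food was fine but the wait was long.",
    "Friendly staff, great coffee.",
    "Long wait at lunch again.",
    "Service was slow at checkout.",
    "Nice atmosphere.",
    "Had to wait even with a booking.",
    "The queue went out the door.",
    "Good value for the price.",
    "Slow service, otherwise pleasant.",
]
share = theme_share(review_texts, WAIT_KEYWORDS)
print(share)  # 0.7
```

Substring matching will also catch unrelated words such as "waiter", so a real setup would need more careful tagging than this.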

Another mistake is assuming reputation risk is only external. In reality, reviews often reveal internal operational failures before management reporting catches up. That is why reputational monitoring should not sit only with marketing. Customer experience, operations, and local teams all need visibility where relevant.

Finally, many businesses wait for the quarterly report. That rhythm is too slow for early detection. Reputation risk needs a shorter monitoring cycle, especially for businesses with steady review volume.

Conclusion

Reputation risk usually becomes visible before it becomes obvious. The problem is that many teams are watching the wrong layer. They look at the rating, not the pattern behind it. They notice isolated complaints, but not recurrence. They react to visible decline instead of catching signals early.

Detecting reputation risk early means monitoring review patterns with enough discipline to spot change before the average score tells the whole story. Repeated complaints, recent shifts in tone, emerging service issues, and concentration by location or process all matter more than a static headline metric.

Businesses that get this right do not wait for ratings to collapse before acting. They use reviews as an early warning system, assign ownership quickly, and track whether perception improves after intervention. That is what makes ORM operational rather than reactive.

If you want to move from manual review reading to a more structured operating model, explore our online reputation management approach.

Turn reviews into decisions with review analytics

If this article fits your situation, see how Analytee structures sentiment, topics, and improvement priorities at scale.

Explore review analytics