What Online Reputation Management Looks Like in Practice

Most businesses with public reviews already know their reputation matters. The problem is that many still manage it in a reactive way. They look at the average rating, read a few recent comments, reply when a complaint becomes visible, and assume that is enough to stay in control.

It usually is not.

Online reputation management, or ORM, is not just about protecting image after something goes wrong. In practice, it is the ongoing discipline of monitoring public feedback, identifying signals early, deciding what matters, and turning that information into action across marketing, operations, customer experience, and leadership.

That distinction matters because reputation is rarely damaged by one isolated review. More often, it shifts through repeated patterns that teams fail to see in time. A drop in perceived service speed, recurring complaints about staff attitude, a growing gap between locations, or a sudden increase in negative comments around one issue can all point to a real operational problem before the average rating makes it obvious.

This article explains what online reputation management actually looks like when it is handled as an operating discipline rather than a loose collection of replies, reports, and assumptions.

What online reputation management is, and what it is not

Online reputation management is the process of monitoring how a business is perceived across public digital touchpoints, understanding what is shaping that perception, and acting on those signals in a structured way.

That definition is broader than review response management, and narrower than brand marketing.

ORM includes tracking review trends, identifying recurring issues, watching for negative shifts, understanding what customers consistently praise or criticize, and deciding how to respond at both the communication level and the operational level. It is not limited to damage control. In fact, if a team only thinks about ORM when there is already a visible problem, it is usually too late.

It also helps to be clear about what ORM is not.

It is not just answering reviews. Responses matter, but they are only one layer. A well-written reply does not solve a repeated service issue.

It is not just watching the average star rating. Ratings summarize outcomes, but they hide context. Two businesses can both have 4.2 stars and face completely different reputational risks.

It is not only a marketing responsibility. Marketing may own visibility and messaging, but many reputation problems are created in operations, service delivery, staffing, or customer experience.

And it is not the same as deep review analytics. Review analytics can support ORM, but ORM is the operating function that decides what to monitor, what to escalate, what to fix, and what to track over time.

That is why ORM works best when it is treated as a management layer, not as a reporting layer.

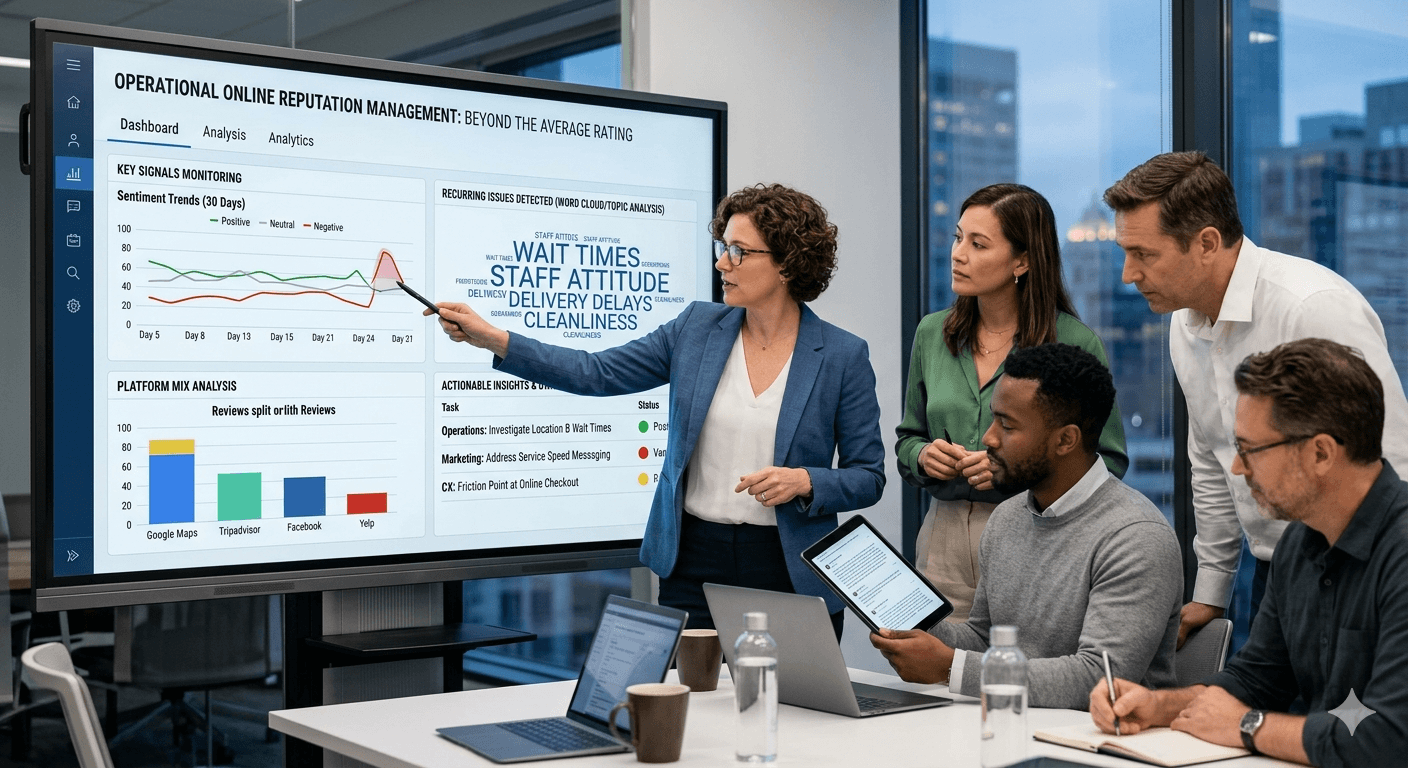

What signals make up online reputation

Reputation is shaped by more than one number. If a team wants to manage it properly, it needs to look at a group of signals together rather than relying on a single indicator.

The most visible signal is the average rating. It is useful because it influences first impressions and often affects click-through behavior on listing platforms. But by itself, it only shows the surface.

Review volume is another important signal. A high rating based on a very small number of recent reviews is not the same as a stable rating supported by consistent feedback over time. Sudden changes in volume can also matter. A spike in negative reviews may reflect a real issue, and so may a sudden drop in review activity, which can mean that fewer customers are engaging at all.

Recency matters as well. A business may still carry a strong average score while its last 30 or 60 days of feedback show a clear decline in quality. In practice, customers often pay attention to what is happening now, not what happened eighteen months ago.
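The gap between a lifetime average and a recent one can be made concrete with a few lines of code. The sketch below is illustrative only: the review data is invented, and the function name `recent_vs_overall` and the 30-day default window are assumptions rather than a standard.

```python
from datetime import date, timedelta
from statistics import mean

def recent_vs_overall(reviews, today, window_days=30):
    """Return (overall average, average over the last `window_days` days).

    `reviews` is a list of (review_date, star_rating) tuples.
    The recent average is None when no review falls inside the window.
    """
    cutoff = today - timedelta(days=window_days)
    overall = mean([rating for _, rating in reviews])
    recent = [rating for day, rating in reviews if day >= cutoff]
    recent_avg = round(mean(recent), 2) if recent else None
    return round(overall, 2), recent_avg

# Invented data: eight strong older reviews, two weak recent ones.
today = date(2024, 6, 30)
reviews = [(date(2023, 1, 15), 5)] * 8 + [
    (date(2024, 6, 10), 3),
    (date(2024, 6, 20), 2),
]

overall, recent = recent_vs_overall(reviews, today)
print(overall, recent)
```

With this sample data the lifetime average still looks strong while the recent window does not, which is the situation described above.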

Then there is review content. This is where operational meaning appears. Repeated mentions of waiting time, cleanliness, delivery problems, staff attitude, billing confusion, or product inconsistency usually reveal more than the score alone. If the same complaint appears across different customers and different days, it is not noise. It is a signal.

Platform mix also matters. A business may look strong on one source and weak on another. Google Maps, Tripadvisor, Facebook, Yelp, app stores, and sector-specific directories often attract different kinds of feedback. If problems concentrate in one source, that can indicate either a real audience-specific issue or a platform-specific exposure risk.

Finally, trend direction matters more than static snapshots. Reputation is not only about where you are. It is about whether perception is improving, stabilizing, or deteriorating.

A good ORM process looks at all of these signals together.

Why the average rating is not enough

Many teams reduce reputation to one question: “What is our rating?”

That is understandable, because ratings are visible and easy to compare. They offer a fast summary, and they are the metric most people outside the business notice first. But as a management tool, the average rating is incomplete.

The first problem is that averages compress very different realities into the same number. A restaurant with 4.3 stars may be improving after fixing staffing issues. Another restaurant with the same 4.3 may be declining because complaints about delays have increased for three weeks. The same score can represent recovery in one case and growing risk in another.
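A minimal sketch of the two-restaurant case, assuming each week contributes the same number of reviews so that the mean of the weekly averages equals the overall rating. The weekly figures, the `direction` helper, and the 0.1-star threshold are all hypothetical choices made for illustration.

```python
from statistics import mean

def direction(weekly_avgs, threshold=0.1):
    """Compare the earlier half of a series with the later half.

    Returns "improving", "declining", or "stable". The threshold is an
    arbitrary illustrative cutoff, not an industry standard.
    """
    if len(weekly_avgs) < 2:
        raise ValueError("need at least two weekly averages")
    half = len(weekly_avgs) // 2
    delta = mean(weekly_avgs[-half:]) - mean(weekly_avgs[:half])
    if delta > threshold:
        return "improving"
    if delta < -threshold:
        return "declining"
    return "stable"

# Invented weekly averages; both series average out to 4.3.
restaurant_a = [4.0, 4.1, 4.2, 4.4, 4.5, 4.6]
restaurant_b = [4.6, 4.5, 4.4, 4.2, 4.1, 4.0]

for name, series in (("A", restaurant_a), ("B", restaurant_b)):
    print(name, round(mean(series), 1), direction(series))
```

Both restaurants report the same headline number, but the direction label separates recovery from growing risk.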

The second problem is that averages react slowly. By the time the visible rating drops enough to trigger concern, the underlying issue may already be well established. If customers have been complaining about delivery delays, inconsistent service, or missing product availability for weeks, the rating is only confirming a pattern that already existed.

The third problem is that ratings do not explain causes. A business cannot improve reputation by acting on a number alone. It needs to know what is behind the score. Is the decline driven by one location? One team? One process? One service line? One recent operational change? Without that layer, teams end up discussing reputation in vague terms instead of making targeted corrections.

A simple example shows why this matters. Imagine a hotel group with a stable rating of 4.1 across its properties. Leadership sees the average and assumes performance is acceptable. But recent review content shows that one location is receiving repeated complaints about check-in delays and room cleanliness, while another location is being praised for staff friendliness and speed. The overall rating hides both a problem and a model worth replicating.

That is why ORM requires more than a dashboard headline. It requires context.

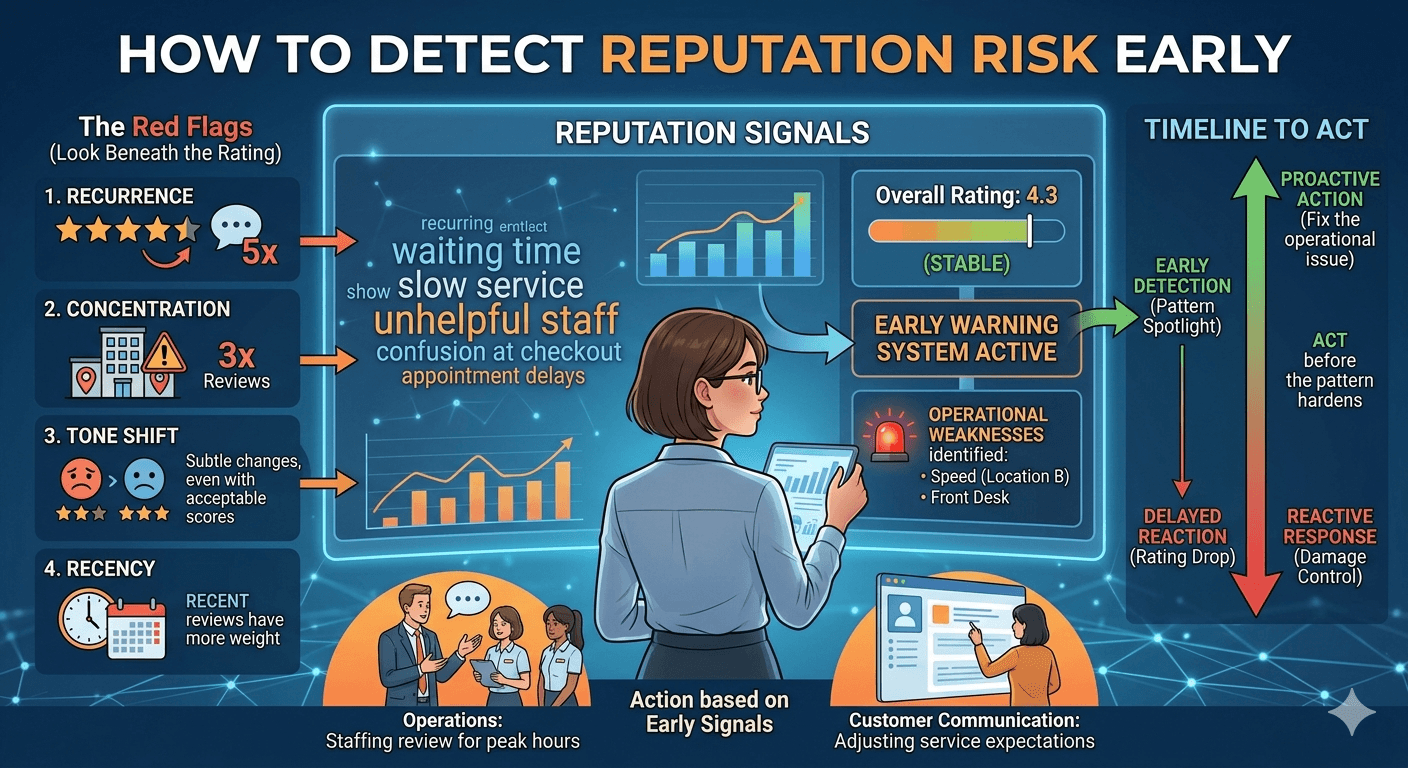

How to monitor reputation risk through reviews

Monitoring reputation risk does not mean staring at review sites all day. It means building a repeatable way to spot changes that matter before they become harder to reverse.

The first step is defining what counts as a meaningful signal. Not every negative review is a risk event. A single complaint about a bad day is not the same as a recurring pattern about poor service, refund issues, or product quality. Teams need to distinguish isolated feedback from repeated evidence.

The second step is watching for concentration. Risk becomes more relevant when the same theme appears repeatedly across multiple reviews, especially in a short time frame. If several customers mention long waits in one week, that may point to an operational bottleneck. If multiple reviewers mention rude staff at one location, that suggests a local management issue rather than random dissatisfaction.
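One rough way to check for concentration is to tag each review with themes and count how often a theme recurs per location inside a short window. The sketch below uses plain keyword matching, which is crude and will miss or misread some comments. The theme names, keywords, seven-day window, and three-mention threshold are all assumptions chosen for the example.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical theme dictionary; a real one would be tuned per business.
THEMES = {
    "wait_time": ("wait", "slow", "queue"),
    "staff_attitude": ("rude", "unfriendly"),
}

def tag_themes(text):
    """Return the set of themes whose keywords appear in the text."""
    lowered = text.lower()
    return {t for t, words in THEMES.items() if any(w in lowered for w in words)}

def concentrated_themes(reviews, today, window_days=7, min_mentions=3):
    """Count (location, theme) pairs in the window and keep the repeated ones."""
    cutoff = today - timedelta(days=window_days)
    counts = Counter()
    for review in reviews:
        if review["date"] >= cutoff:
            for theme in tag_themes(review["text"]):
                counts[(review["location"], theme)] += 1
    return {key: n for key, n in counts.items() if n >= min_mentions}

# Invented sample reviews.
reviews = [
    {"date": date(2024, 6, 25), "location": "Downtown", "text": "Long wait for a table."},
    {"date": date(2024, 6, 26), "location": "Downtown", "text": "Food was fine but service was slow."},
    {"date": date(2024, 6, 28), "location": "Downtown", "text": "We had to wait 40 minutes."},
    {"date": date(2024, 6, 27), "location": "Harbor", "text": "The host was rude."},
    {"date": date(2024, 5, 1), "location": "Downtown", "text": "Slow service."},
]

flagged = concentrated_themes(reviews, today=date(2024, 6, 30))
print(flagged)
```

The single complaint at the second location and the older review both fall below the threshold, so only the repeated waiting-time theme is flagged.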

The third step is monitoring change over time. Reputation risk is often visible in direction before magnitude. For example, the average score may still look acceptable, but the proportion of clearly negative comments may be rising. Or the volume of complaints around one issue may increase even while ratings remain stable. That kind of early movement matters because it gives teams time to respond before the problem becomes more expensive.
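The idea that direction shows up before magnitude can be sketched by tracking the share of clearly negative reviews alongside the weekly average. The ratings below are invented, and treating one and two stars as "clearly negative" is an assumption, as are the function names.

```python
def weekly_summary(ratings_by_week, negative_max=2):
    """Return (week, average rating, share of ratings <= negative_max) per week."""
    summary = []
    for week, ratings in ratings_by_week.items():
        average = sum(ratings) / len(ratings)
        negative_share = sum(1 for r in ratings if r <= negative_max) / len(ratings)
        summary.append((week, round(average, 2), round(negative_share, 2)))
    return summary

def negative_share_rising(summary):
    """True when the negative share increases in every consecutive week."""
    shares = [share for _, _, share in summary]
    return all(later > earlier for earlier, later in zip(shares, shares[1:]))

# Invented ratings: the weekly average never moves, but low ratings creep in.
ratings_by_week = {
    "W1": [4, 4, 4, 4, 4, 4, 4, 4, 4, 4],
    "W2": [5, 5, 5, 4, 4, 4, 4, 4, 4, 1],
    "W3": [5, 5, 5, 5, 5, 5, 4, 4, 1, 1],
}

summary = weekly_summary(ratings_by_week)
for row in summary:
    print(row)
print("negative share rising:", negative_share_rising(summary))
```

In this sample the average holds steady every week while the negative share climbs, which is the kind of early movement an average-only view would miss.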

The fourth step is assigning ownership. Reviews create value only when somebody knows what to do next. If complaints about delivery times belong to operations, they should not sit unresolved in a marketing report. If negative sentiment is linked to unclear expectations in ads or listings, marketing may need to adjust messaging. If customer support is involved, support leaders need visibility too.
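Ownership can be as simple as an explicit mapping from complaint theme to the team that should act on it. The sketch below is a hypothetical routing table: the theme labels and the fallback owner `reputation_lead` are assumptions, while the team names mirror the examples in the paragraph above.

```python
# Hypothetical mapping of complaint themes to owning teams.
OWNERS = {
    "delivery_time": "operations",
    "misleading_listing": "marketing",
    "refund_handling": "customer_support",
}

def route(theme, default="reputation_lead"):
    """Return the owning team for a theme, or a fallback owner if unmapped."""
    return OWNERS.get(theme, default)

for theme in ("delivery_time", "misleading_listing", "parking"):
    print(theme, "->", route(theme))
```

The fallback matters as much as the mapping: an unmapped theme still lands with a named owner instead of sitting unassigned in a report.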

The fifth step is tracking response after intervention. ORM is not complete when a problem is identified. It becomes useful when teams can see whether the change they made improved perception. If staffing was adjusted, did waiting-time complaints decrease? If a process changed, did customers stop mentioning confusion? Without that follow-up, the business is only observing reputation, not managing it.
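Follow-up after an intervention can be sketched as a before-and-after comparison of how often a theme is mentioned. The reviews, the June 1 change date, and the `complaint_rate` helper are invented for illustration, and keyword matching is again a rough stand-in for proper theme detection.

```python
from datetime import date

def complaint_rate(reviews, keyword, start, end):
    """Share of reviews dated in [start, end) whose text mentions the keyword.

    Returns None when no reviews fall in the period.
    """
    in_period = [text for day, text in reviews if start <= day < end]
    if not in_period:
        return None
    hits = sum(1 for text in in_period if keyword in text.lower())
    return hits / len(in_period)

# Invented reviews around a staffing change assumed to take effect on June 1.
reviews = [
    (date(2024, 5, 3), "Long wait at the counter"),
    (date(2024, 5, 10), "Had to wait far too long"),
    (date(2024, 5, 18), "Great food"),
    (date(2024, 5, 25), "The wait was unacceptable"),
    (date(2024, 6, 4), "Quick and friendly"),
    (date(2024, 6, 11), "Seated right away"),
    (date(2024, 6, 19), "Still a bit of a wait on Friday"),
    (date(2024, 6, 27), "Lovely evening"),
]

before = complaint_rate(reviews, "wait", date(2024, 5, 1), date(2024, 6, 1))
after = complaint_rate(reviews, "wait", date(2024, 6, 1), date(2024, 7, 1))
print(before, after)
```

A drop like the one in this sample does not prove the change caused the improvement, but it gives the team something concrete to track in the weeks that follow.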

This is where ORM becomes practical. It closes the gap between public feedback and internal action.

Which teams use ORM, and for what

One reason ORM is often underdeveloped is that businesses assume it belongs to one department. In practice, several teams benefit from it, each for different reasons.

Marketing uses ORM to understand public perception, protect acquisition efficiency, and make sure brand promises match the customer experience. If reviews repeatedly mention something the brand claims to do well, marketing can reinforce it. If reviews expose a credibility gap, that gap needs attention before messaging gets stronger.

Customer experience teams use ORM to identify friction points at scale. Reviews often surface the same recurring issues that surveys or support tickets reveal, but from a public perspective. That makes them especially valuable because they show what customers are willing to say when they are not filling out an internal form.

Operations teams use ORM to identify where execution is failing. Complaints about wait times, inconsistency, service speed, cleanliness, delivery, or stock issues often point to operational failures more directly than internal reporting does. ORM helps them see which problems are visible to customers and how frequently they are being repeated.

Reputation or communications teams use ORM to determine when a business needs a public response, when an issue is isolated, and when there is a broader pattern that requires escalation.

Leadership uses ORM for prioritization. Public feedback can show whether a problem is localized, structural, growing, or already damaging trust. That helps determine where to invest management attention and resources.

The common thread is simple: ORM is most useful when it supports decisions, not just observation.

Common mistakes in online reputation management

A frequent mistake is reacting only to the loudest reviews. A dramatic one-star comment may draw attention, but repeated three-star complaints about the same issue can be more important because they reveal a broader pattern.

Another mistake is treating every platform the same way without context. Customers may bring different expectations to Google Maps than to Tripadvisor or an app store. ORM works better when teams understand what each source is telling them, not just how many stars appear on each one.

A third mistake is focusing too heavily on response wording. Good review responses are useful, especially when they show accountability and professionalism. But if a business invests more in polished replies than in fixing the recurring issue behind those replies, ORM becomes cosmetic.

Many teams also make the mistake of reviewing reputation too infrequently. Monthly reporting may be enough for executive visibility, but not for early signal detection. If a problem grows quickly, waiting for the end of the month can delay action.

Another common error is separating reputation from operations. If review monitoring lives in one report and operational change happens somewhere else, the business sees the signal but fails to absorb it.

And finally, some businesses still rely too much on intuition. Leaders may say things like “I don’t think this is a real issue” even when the same complaint appears across many comments. ORM brings discipline by replacing anecdotal interpretation with repeated external evidence.

Conclusion

Online reputation management is not a branding extra. It is a practical operating discipline for businesses that are publicly reviewed and need to understand how perception evolves over time.

Handled properly, ORM helps teams look beyond the average rating, detect problems earlier, distinguish isolated complaints from meaningful patterns, and connect public feedback to action. It creates a more reliable way to decide what deserves attention, what should be escalated, and what should be monitored after changes are made.

That is what online reputation management looks like in practice: not image control in the abstract, but a structured way to monitor signals, reduce uncertainty, and make better decisions from public feedback.

If your team is still reading reviews manually or relying mostly on average ratings, that usually means the reputation signal exists, but the operating process around it does not.

If you want to turn review monitoring into a repeatable operating discipline, explore our online reputation management solution.

Monitor and improve your online reputation

Move from occasional review reading to a continuous ORM layer with alerts, reputation scoring, and action priorities.

Explore online reputation management